Google Penguin 2.0: A Spammer’s Nightmare

There’s been a lot of buzz in SEO circles over the last week about a patent granted to Google on August 14th that could cause some serious headaches for webmasters and SEOs. The patent covers a system that governs how Google’s algorithm might respond when it detects that a website is using webspam to manipulate search rankings.

Or in Google’s words:

“A system determines a first rank associated with a document and determines a second rank associated with the document, where the second rank is different from the first rank. The system also changes, during a transition period that occurs during a transition from the first rank to the second rank, a transition rank associated with the document based on a rank transition function that varies the transition rank over time without any change in ranking factors associated with the document.”

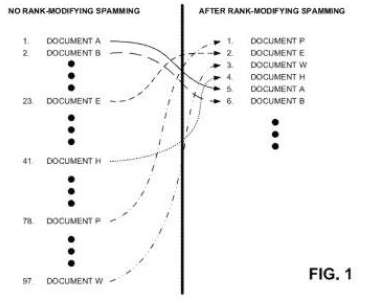

In plain English, the patent outlines how Google’s algorithms could respond when they detect ranking manipulation. Rather than simply dropping the website’s rankings, the algorithm could react in ways the spammer doesn’t expect, raising, maintaining or lowering the website’s rankings for a period of time, so that webspammers never really know whether what they’re doing is working, or why.
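To make the idea concrete, here’s a rough Python sketch of what a “rank transition function” could look like. This is purely our illustration: the patent abstract doesn’t spell out a formula, and the function name, parameters and random-overshoot approach below are all assumptions of ours, not Google’s actual implementation.

```python
import random


def transition_rank(old_rank, target_rank, elapsed_days, transition_days, seed=0):
    """Return the rank shown for a page during a hypothetical transition period.

    old_rank / target_rank: positions in the results (1 is the top spot).
    elapsed_days: days since the ranking change was triggered.
    transition_days: assumed length of the transition period.
    """
    # Once the transition period is over, the page settles at its target rank.
    if elapsed_days >= transition_days:
        return target_rank

    # Seed per day so a given day always produces the same rank (illustrative only).
    rng = random.Random(seed * 1000 + elapsed_days)

    low = min(old_rank, target_rank)
    high = max(old_rank, target_rank)
    spread = high - low

    # During the transition the rank can land above, between or below the two
    # real ranks, even though nothing about the page itself has changed.
    candidate = rng.randint(low - spread, high + spread)
    return max(1, candidate)  # a rank can never be better than position 1


# A page that "should" move from position 10 to position 4 over 30 days.
for day in (0, 10, 20, 30):
    print(day, transition_rank(10, 4, day, 30))
```

The point of the sketch is that anyone watching the rankings during those 30 days sees movement that has nothing to do with what they changed on the site, which is exactly what makes testing spam tactics so unreliable.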

It’s essentially a middle finger from a Google fed up with webspam. And it’s going to be big…

What’s this got to do with Penguin 2.0?

Just a day after Google was granted the patent, its head of webspam, Matt Cutts, announced that SEOs “don’t want the next Penguin update”, warning that Google’s next moves would be “jarring and jolting” to SEOs and webmasters. Could he be talking about the rollout of the above patent into the algorithm? The timing seems about right.

One of the reasons SEOs are able to get such concrete information about how Google’s algorithm works is that they can make a change to a site and then analyse its performance. If the site’s rankings go up you’ve got a winner, and if they go down you’ve got a bust. What this patent essentially does is remove the predictability that SEOs have relied on to draw conclusions about what works to get a site ranked.

That’s pretty “jarring and jolting” if you ask us, and sounds just about in line with Penguin’s original intent to go after webspam like it was a bucket of sardines. While fluctuating results for detected spammers will undoubtedly leave more and more SEOs shooting in the dark, they should also reduce the focus on spammier tactics, since there will be no real way to measure whether those tactics are working.

So what happens to SEO?

Google’s always said that they’re against the shady practices many SEOs use to manipulate rankings, not SEO itself. With this patent, Google wants to change the conversation amongst SEOs from ‘What tactics should we use?’ to a more organic ‘How can we build a great user experience?’.

Think about it for a second. Google has built their algorithms so that if you nail what they think constitutes a great site, the rankings will come. Great content means shares & links, a good user experience means better engagement, and a solid design & build means Google never has any issues crawling through your site. If Google can make SEOs focus on building great sites rather than trying to manipulate rankings with webspam, it improves their results, the user experience and the Internet as a whole.

Maybe we’re idealistic. Maybe Google’s crazy. But this might just work.
